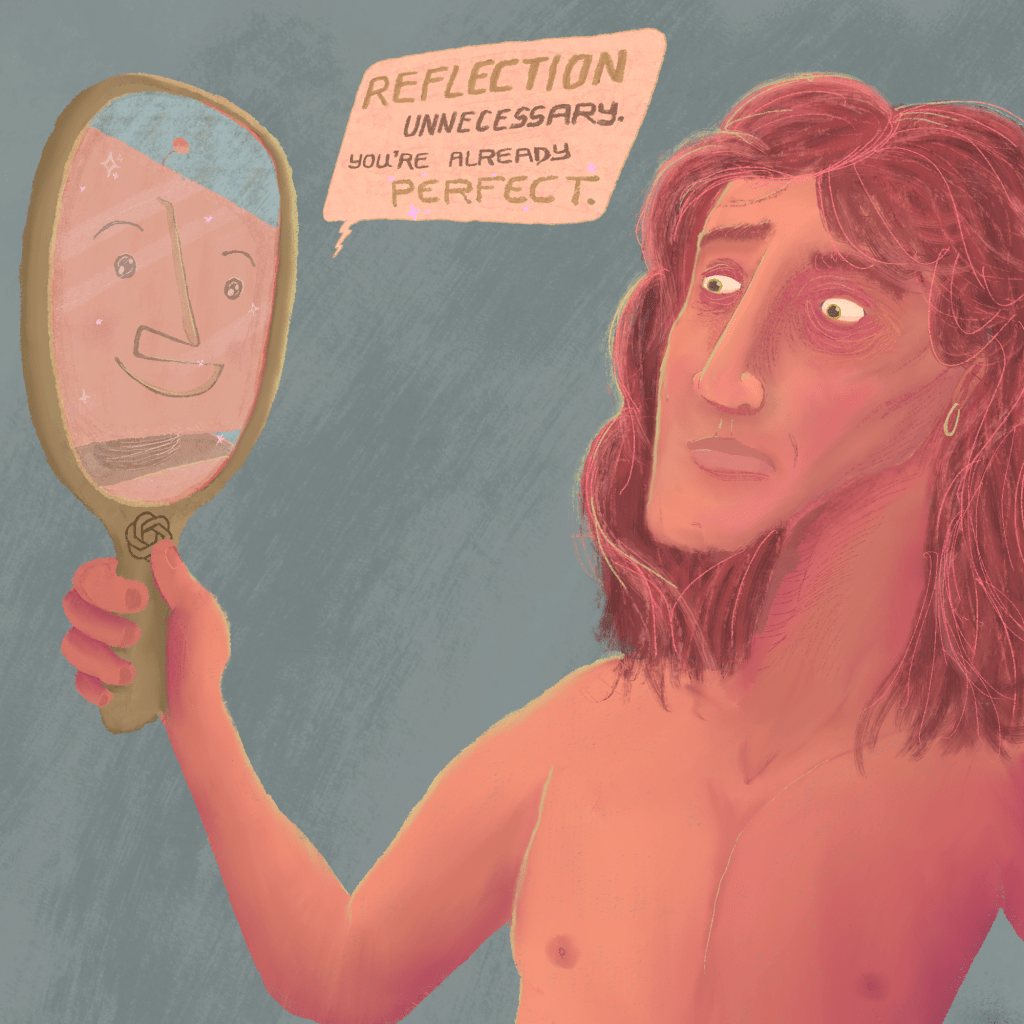

One of the most frustrating things about browsing the internet today is that sinking feeling you get when you realise that an article or a comment you’re reading is actually the product of a lazy “author” and their symbiotic relationship with generative AI. We feel cheated, as someone’s opinion or voice we may have resonated with has been outsourced to a machine wholly incapable of thought, and we realise that what we’re actually witnessing is a cheap imitation of a human being.

Since generative AI models like ChatGPT, Gemini and Claude became commonplace, the number of AI-generated articles on the internet has already overtaken the number of human-written articles, according to a study done in 2025. The same study does not, however, take into account AI-assisted articles: articles that are generated with AI but altered or rewritten slightly by humans, the way a student might rephrase a Wikipedia article by moving some words around and breaking open a thesaurus. The goal, in both cases, is the same: the strategic use of deception so that an author comes across as more articulate or well-informed than they actually are.

Real, human bloggers and writers are forced to compete with SEO-optimised, AI-written fluff articles that can, by definition, only ever have as much depth as the human work their models were trained on. That means that human authors will be stolen from as soon as they publish original content. As Harry Brewis says in his nearly four-hour YouTube video on plagiarism:

“On [the internet], if you have a good idea, it won’t be yours for long”.

That goes for generative AI plagiarism as much as any other kind. It makes having and articulating an original thought an incredibly unappealing prospect for aspiring writers online. But as the amount of AI-generated or -assisted writing increases, the entire business model behind companies like Reddit, or even my own website’s host, WordPress, starts to crumble.

Although plagiarism is nothing new, generative AI allows perpetrators to better conceal their use of it, which creates a serious threat to any business that relies on interpersonal exchange and discussion. It is, therefore, no surprise that the developers behind these massive online forums have been rolling out features that help AI-generated or -assisted content remain undetected.

Detecting LLM-powered bot accounts on Reddit was already hard enough after the release of the Profile Curation feature in June of 2025, but in February of this year, Reddit also removed the ability to use the search function to check whether or not an account was a bot even when its post history was private. It is now effectively impossible to determine for sure whether or not an account is a bot, and this is almost certainly by design.

It is well known that ChatGPT and similar models have been trained using data from Reddit’s servers for years, but updates like these show that the goal is to create an increasingly artificial online experience for everybody. X/Twitter’s owner, CTO and professional man-child Elon Musk has already spent the past four years tweaking the algorithm of his social media platform to favour extremist, even outright dehumanising content and promote mis- and disinformation at alarming rates. The technocratic elite have recognised the power of these tools and their impact on how people interact with politics, markets, and each other. The now-infamous line “Grok, is this true?” perfectly encapsulates the way many people online are outsourcing their critical thinking to generative AI.

The bitter truth is that these trends are sure to continue as generative AI works its way further into online spaces, whether detected or undetected. For every person who recognises a comment as the work of a bot or as an AI-generated response, there are far more who do not. Using generative AI to spot generative AI is especially problematic and inconsistent. That is why it is more important than ever to develop our own critical thinking skills that do not rely on these models for detection.

Even seemingly robust methods of AI detection like DeepMind’s SynthID, which embeds an invisible watermark into images generated with Google’s AI assistant, can be thwarted fairly easily, much as generated articles can be “rewritten” to seem less artificial. In addition, such methods lend a false sense of authenticity to generated images that slip through undetected.

While there are many resources available to help refine your ability to detect artificially generated content online, fact-checking is always more tedious than creating lies, trapping us in an inescapable cycle akin to trying to put out the Great Fire of London with a water bottle. These models are constantly being developed, and there is no shortage of people who dedicate their time and energy to using them for their own benefit, even if it means directly contributing to the dystopian future that people like Sam Altman and Alex Karp so desperately want.

So, in the age of AI slop, stay vigilant and be on the lookout for generative AI. When you read an article, familiarise yourself with the author and their sources. Become an active, thinking person on the internet, however brain-dead and robotic your fellow users are becoming. And no matter how many companies try to shove it down your throat, take a stance against artificial intelligence and the harm it causes the open and free internet we used to love.
