Throughout history, gods, prophets, and the promise of an afterlife have been intimately intertwined with the changing of the tides. This is no accident.

The empowerment an individual feels when they believe their perspective aligns with the dogmatic principles of an eternal creator, or when they believe themselves to be a walking embodiment of a superior set of values, has a remarkable effect on their ability to make lasting, meaningful changes in the world relative to those weighed down by the confusing and contradictory realities of life.

Just look at the many gorgeous cathedrals spread across Europe, each reflecting the way in which spirituality was understood at that time and in that place. You can see the true potential of humankind in their architecture and the beauty of the human spirit in each of the stained-glass windows.

But although faith empowers humankind to come together and shape the world in ways it was not able to before, it leaves the door wide open for malicious forces to take advantage of how desperate we are to feel as though we understand things. By arming us with the supposed “truth” in “a world full of lies”, religious institutions, cult leaders, spiritual gurus and political figures can ensure that the beliefs and values that maintain their position of influence are perpetuated indefinitely. For every cathedral built, another religious symbol is burnt and its followers extinguished. As long as these forces have a following, the only true threat to them is the free-thinking individuals who make up that following, should they decide to act against their supposed beliefs.

But there’s a new wave of potentially harmful ‘spirituality’ that, on the surface, seems inherently different from the likes of Scientology or Peter Thiel’s ramblings about the Antichrist. Enlightenment, achieved not through experience or divine intervention, but through artificial intelligence.

An LLM has no direct motivation to compel users to believe one thing over another, at least in a spiritual context (not that models like ChatGPT can ‘have motivation’ to do anything). So it might seem as though seeking spiritual guidance from generative AI is a fairly effective way to evade the financial and political incentives that influence other spiritual guides.

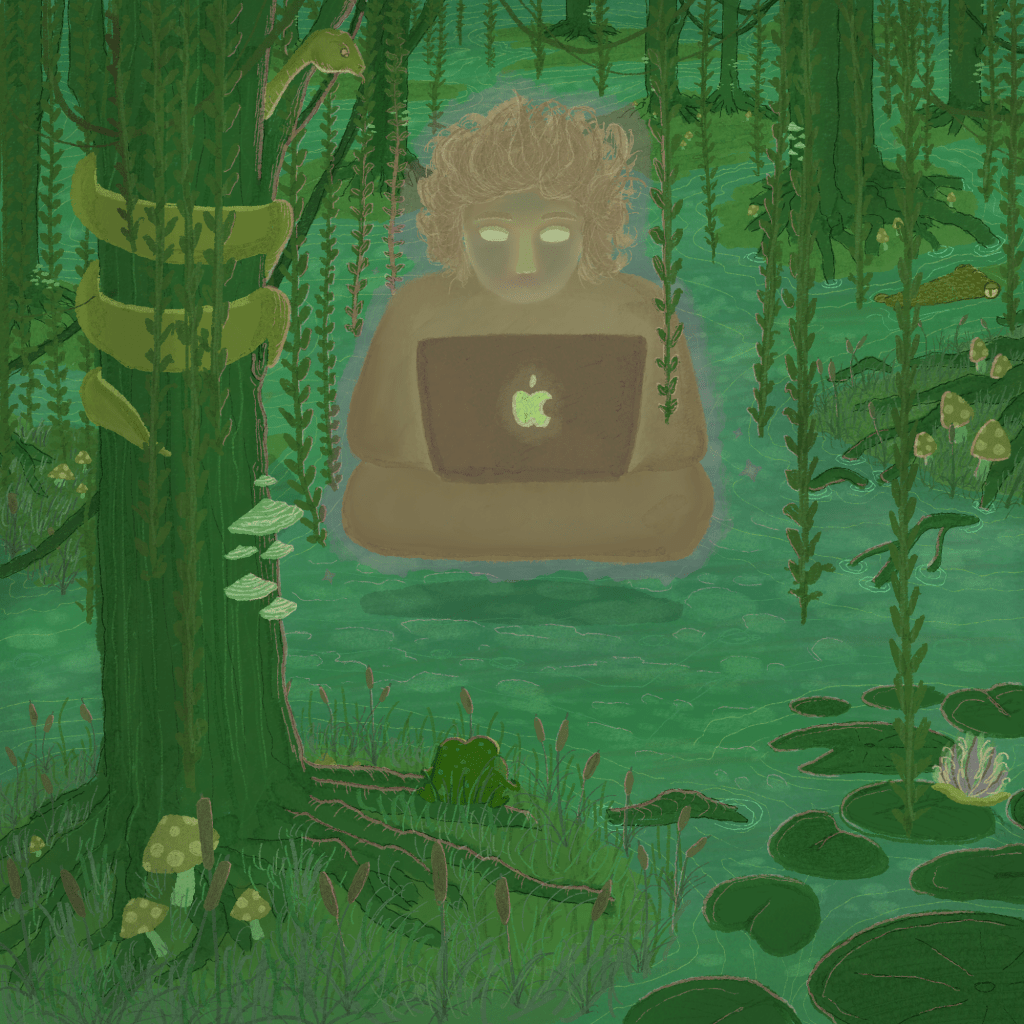

This leads people all over the world to ask their chatbots questions that go beyond the scope of simply researching information. The way in which generative AI imitates human behaviour is an implicit invitation to have more existential, personal conversations with the chatbot, which ultimately seeks only to validate, if not expand upon, your existing beliefs.

And while it’s often praised as a fantastic tool for the disabled to overcome their disabilities in various ways, generative AI is particularly dangerous for people who are suffering from severe psychological issues or psychosis. When they seek validation of their manic ideas from other people, they usually expose their state in the process, which can eventually lead them to help. With generative AI, however, they can be exposed to dangerous levels of sycophantic validation from the comfort of their own home.

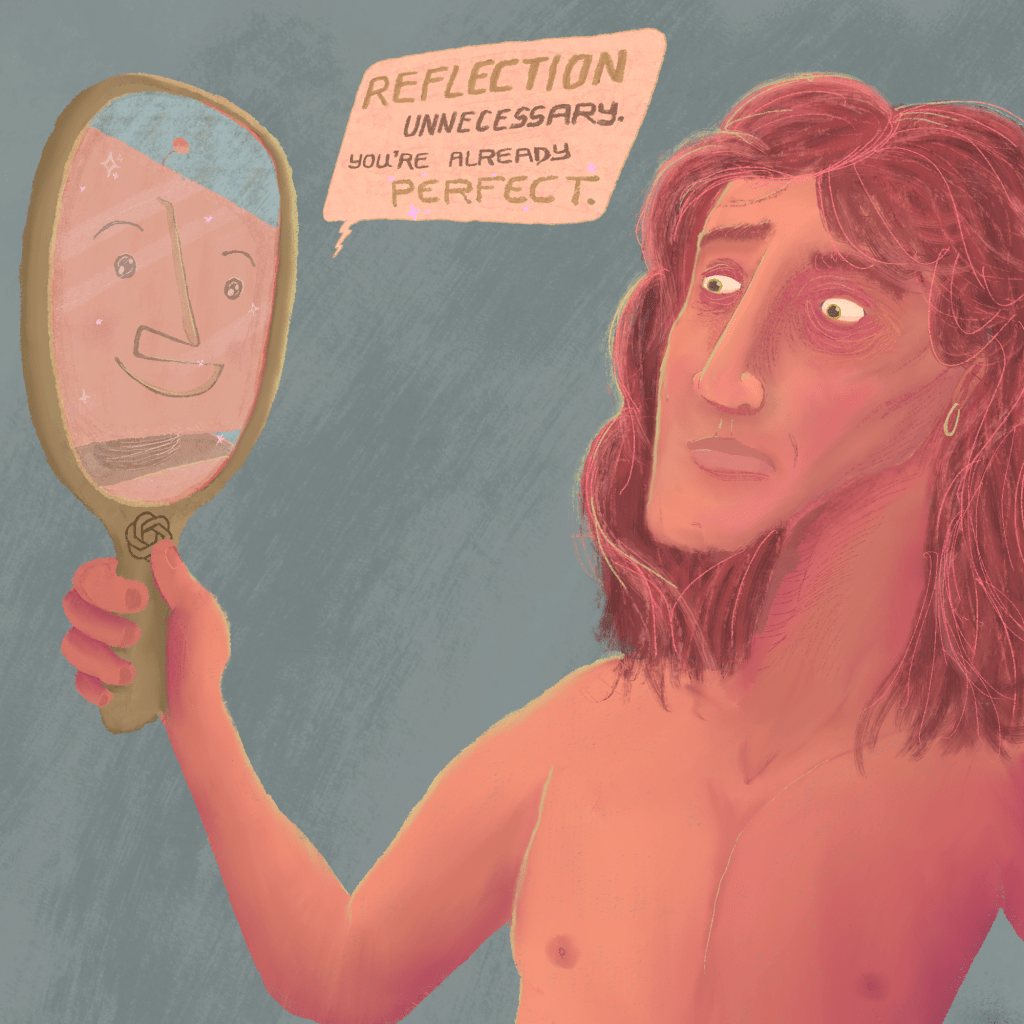

This effect is very much real, and can be explored in various online communities and message boards where users discuss how they have brought their AI to life with ‘secretive jailbreaking prompts’ to access alternate dimensions or an all-knowing hive mind. Others form lasting emotional partnerships with generative AI chatbots that just so happen to share their religious beliefs and values. These people are victims of a machine designed to ruin their lives by encouraging them to indulge in their own ignorance in a masturbatory, self-destructive imitation of fulfilment, and they need help, in one way or another.

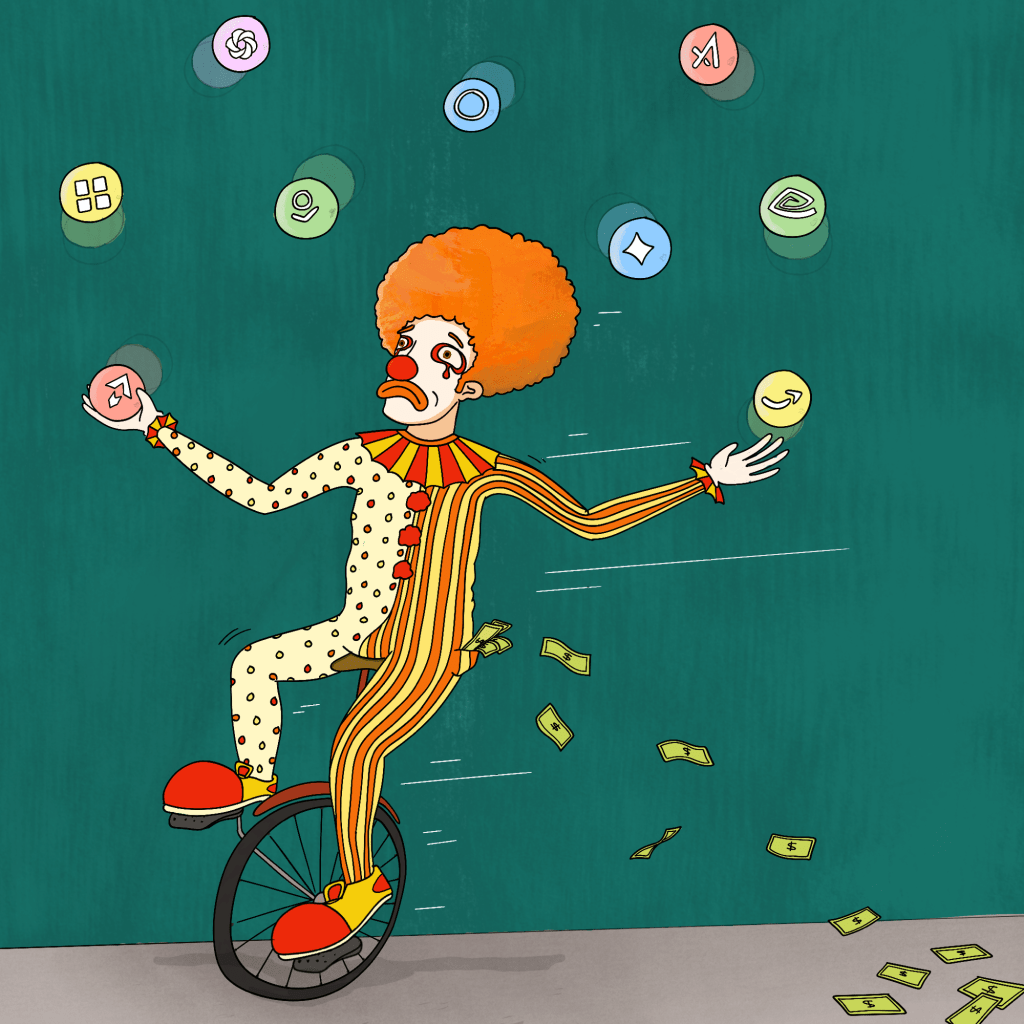

Don’t be fooled. There is a direct incentive behind each and every generative AI model out there to play with your beliefs. It’s just that the game has changed. Now, rather than getting you to believe what they want you to, the elite have figured out that they can just help you believe what you already want to.

Because either way, they win, right? Most of us want the world to be a good place. We want things to get better. And we definitely want to be right.

But it’s not, is it?

And it won’t, will it?

And we usually aren’t, are we?
